Optical Character Recognition (OCR) is a technology that allows computers to recognize and extract text from images and scanned documents. It has been around for decades and has become an integral part of many industries, including business, education, and healthcare.

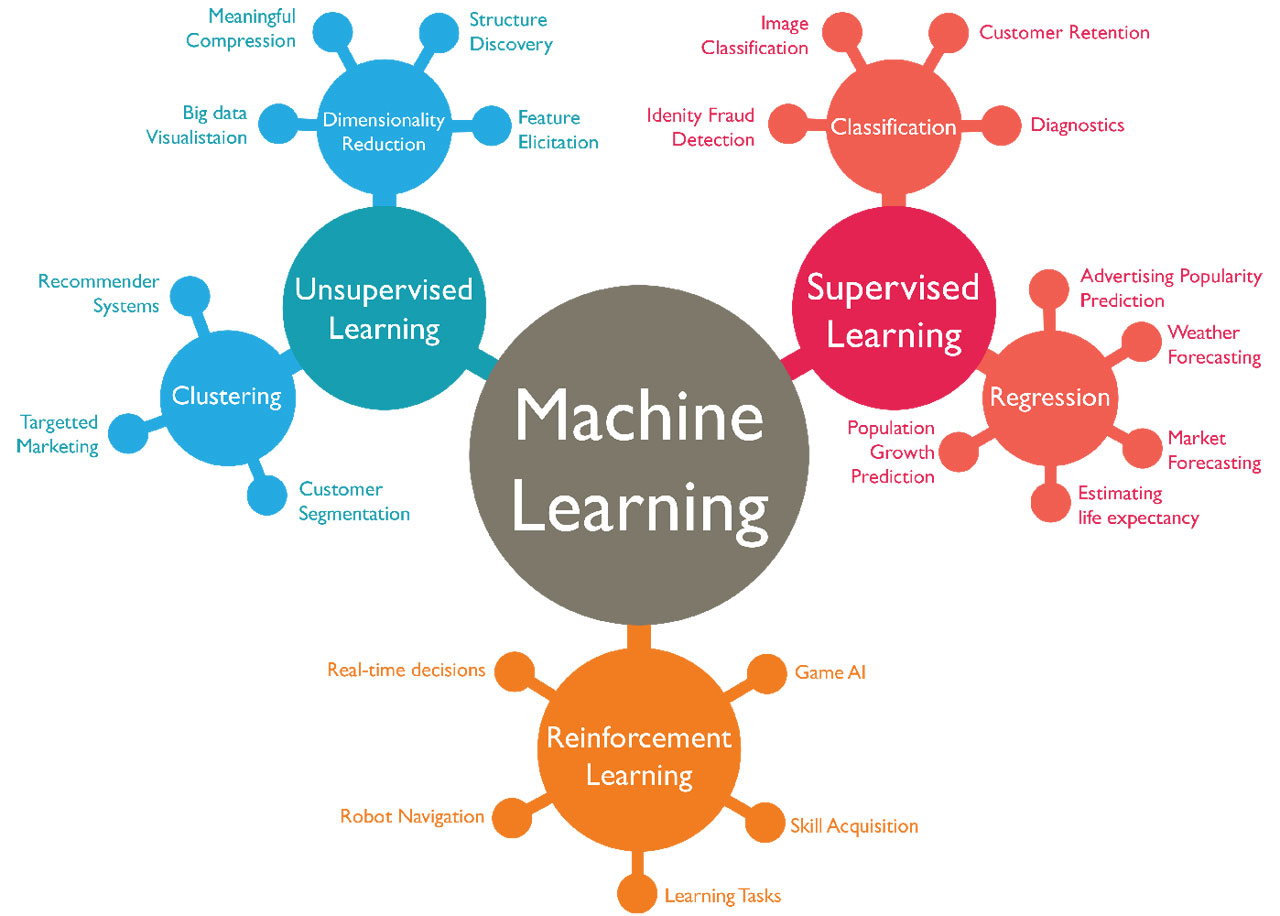

Machine learning, on the other hand, is a subset of artificial intelligence that allows computers to learn and improve their performance without explicit programming. It involves feeding a computer large amounts of data and allowing it to learn patterns and make decisions based on that data.

Both OCR and machine learning have become increasingly important in today’s world because they enable organizations to automate processes. OCR allows for the digitization and organization of physical documents, while machine learning can help businesses analyze data and make predictions about future trends.

Because the two technologies serve different purposes, it is important to understand which one works best for a given situation. In this article, we will explore the differences between OCR and machine learning, as well as the advantages and disadvantages of each. We will also discuss when to use OCR and when to use machine learning, and provide examples of their applications in different industries.

OCR (Optical Character Recognition)

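The concept of OCR can be traced back to the early twentieth century. In 1914, Emanuel Goldberg, a Russian-born engineer working in Germany, developed a machine that read characters and converted them into telegraph code, and in the late 1920s he built a “Statistical Machine” that searched microfilm archives using optical recognition.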

However, it was not until the 1960s that this technology began to be used widely, with the development of more advanced OCR software and hardware.

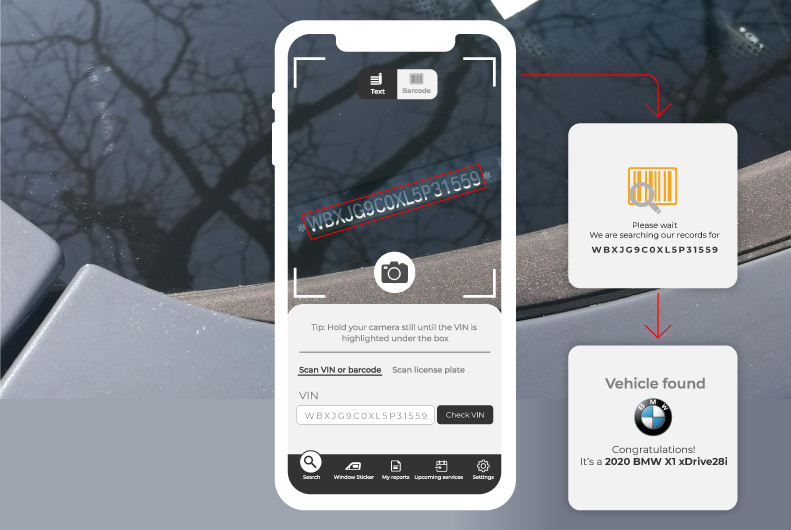

Today, OCR technology is used in a variety of applications, including document scanning, data entry, and indexing. It is also used in machine-readable passport scanners and automated teller machines (ATMs). OCR has made it possible to digitize and organize large volumes of paper documents, saving time and resources for businesses and other organizations.
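Passport scanners show how OCR output can be checked automatically. The machine-readable zone (MRZ) on a passport protects fields such as the document number and dates with check digits, so a reader can flag a likely misread instead of accepting it silently. The sketch below assumes the ICAO Document 9303 check-digit scheme (digits keep their value, `A` to `Z` map to 10 to 35, the `<` filler counts as 0, repeating weights 7, 3, 1, sum modulo 10). The function names are hypothetical, and a real MRZ reader would also parse field positions and composite check digits.

```python
def mrz_char_value(ch):
    """Map an MRZ character to its value: digits as-is, A-Z as 10-35, '<' filler as 0."""
    if "0" <= ch <= "9":
        return ord(ch) - ord("0")
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10
    if ch == "<":
        return 0
    raise ValueError(f"invalid MRZ character: {ch!r}")


def mrz_check_digit(field):
    """Weighted sum using the repeating weights 7, 3, 1, taken modulo 10."""
    weights = (7, 3, 1)
    return sum(mrz_char_value(ch) * weights[i % 3] for i, ch in enumerate(field)) % 10


def field_is_valid(field, check_char):
    """True if an OCR'd field agrees with the OCR'd check digit printed after it."""
    if len(check_char) != 1 or not ("0" <= check_char <= "9"):
        return False
    return mrz_check_digit(field) == int(check_char)


# Specimen passport number used in ICAO 9303 examples, printed with check digit 6.
print(mrz_check_digit("L898902C3"))        # 6
print(field_is_valid("L898902C3", "6"))    # True
print(field_is_valid("L89890203", "6"))    # False: 'C' misread as '0'
```

A failed check does not say which character is wrong, only that the line should be rescanned or reviewed.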

In recent years, OCR technology has continued to evolve, with the development of more advanced algorithms and the use of machine learning to improve its accuracy and speed. Despite these advances, OCR still has its limitations and may not always be the best solution for extracting text from images and documents.

How OCR works

Optical Character Recognition (OCR) works by analyzing an image or scanned document and identifying the characters within it. Here’s a general overview of the OCR process:

- Preprocessing: The image or document is preprocessed to remove any noise or distortions that may interfere with the OCR process. This may include cropping the image, adjusting the contrast, and removing any background noise.

- Segmentation: The OCR software divides the image into regions such as text blocks, lines, words, and individual characters. This step is important because it allows the software to analyze each character or word separately.

- Recognition: The OCR software analyzes each character or word and compares it to a library of known characters or words. Based on this comparison, the OCR software assigns a label to the character or word.

- Postprocessing: The OCR software performs any necessary postprocessing, such as correcting errors or formatting the text.
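The four steps above can be sketched in plain Python on a toy example. Every detail here is a simplifying assumption rather than how a production engine works: the hypothetical 3×5 bitmap "font" that doubles as the template library, the fixed binarization threshold, segmentation at ink-free columns, recognition by counting mismatched pixels (template matching), and a tiny made-up lexicon for postprocessing.

```python
# Hypothetical 3x5 bitmap "font" used as the template library ('#' = ink).
TEMPLATES = {
    "H": ["#.#", "#.#", "###", "#.#", "#.#"],
    "I": ["###", ".#.", ".#.", ".#.", "###"],
    "L": ["#..", "#..", "#..", "#..", "###"],
    "T": ["###", ".#.", ".#.", ".#.", ".#."],
}
TEMPLATE_BITS = {
    label: [[1 if ch == "#" else 0 for ch in row] for row in rows]
    for label, rows in TEMPLATES.items()
}
LEXICON = ("HIT", "LIT", "HILL", "TILL")  # hypothetical word list


def render(text, noise=()):
    """Draw text as a grayscale image (0 = black, 255 = white), flipping pixels listed in noise."""
    rows = [[] for _ in range(5)]
    for i, ch in enumerate(text):
        for y, pattern in enumerate(TEMPLATES[ch]):
            if i:
                rows[y].append(255)  # one blank column between characters
            rows[y].extend(0 if c == "#" else 255 for c in pattern)
    for y, x in noise:
        rows[y][x] = 255 - rows[y][x]
    return rows


def preprocess(gray, threshold=128):
    """Preprocessing: binarize so dark pixels become 1 (ink) and light pixels 0."""
    return [[1 if px < threshold else 0 for px in row] for row in gray]


def segment(binary):
    """Segmentation: split one text line into glyphs at columns that contain no ink."""
    height = len(binary)
    glyphs, columns = [], []
    for x in range(len(binary[0])):
        column = [binary[y][x] for y in range(height)]
        if any(column):
            columns.append(column)
        elif columns:
            glyphs.append(columns)
            columns = []
    if columns:
        glyphs.append(columns)
    # Each glyph was collected column by column; transpose it back to rows.
    return [[[col[y] for col in g] for y in range(height)] for g in glyphs]


def recognize(glyph):
    """Recognition: pick the template that differs from the glyph in the fewest pixels."""
    def mismatches(template):
        if len(template[0]) != len(glyph[0]):
            return float("inf")  # widths differ; this toy matcher cannot compare them
        return sum(a != b for t_row, g_row in zip(template, glyph) for a, b in zip(t_row, g_row))
    return min(TEMPLATE_BITS, key=lambda label: mismatches(TEMPLATE_BITS[label]))


def postprocess(chars):
    """Postprocessing: join characters; snap non-words to the closest same-length lexicon entry."""
    word = "".join(chars)
    candidates = [w for w in LEXICON if len(w) == len(word)]
    if not candidates or word in candidates:
        return word
    return min(candidates, key=lambda w: sum(a != b for a, b in zip(w, word)))


def ocr(gray):
    return postprocess([recognize(g) for g in segment(preprocess(gray))])


# "HIT" with two pixels corrupted by simulated scanner noise.
print(ocr(render("HIT", noise=[(1, 1), (2, 5)])))  # HIT
```

Template matching like this breaks down quickly with new fonts, sizes, or touching characters, which is one reason modern OCR engines rely on trained models instead.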

Advantages of OCR

Optical Character Recognition (OCR) has several advantages, including:

- Increased efficiency: OCR enables quick digitization of paper documents, reducing the need for manual data entry and speeding up business processes.

- Reduced errors: On clean, high-quality scans, OCR can extract text reliably, reducing the risk of typing errors that occur with manual data entry.

- Improved organization: OCR makes scanned documents searchable, so digital documents are easier to organize, store, find, and access.

- Cost savings: By automating the digitization and organization of paper documents, OCR can help businesses save time and money.

- Greater accessibility: OCR converts scanned images into machine-readable text, which screen readers can read aloud or render in braille, making documents easier to access for people with visual impairments or other print disabilities.

Disadvantages of OCR

There are also some limitations and disadvantages to using Optical Character Recognition (OCR) technology, including:

- Accuracy: OCR technology may not always be 100% accurate, particularly when dealing with images or documents with low contrast or handwriting. This can lead to errors in the extracted text.

- Complex documents: OCR technology may struggle with more complex documents, such as those with tables or multiple columns, as it may have difficulty accurately segmenting and recognizing the text.

- Scanning quality: The quality of the scanned image or document can significantly impact the accuracy of OCR. If the image is blurry or distorted, the OCR process may be less accurate.

- Training: OCR software may need to be trained or fine-tuned to improve its accuracy, which can be time-consuming and require specialized expertise.

- Cost: OCR technology can be expensive to implement and maintain, particularly for organizations with large volumes of documents to be digitized.
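The accuracy limitation is usually quantified by comparing OCR output with a manually verified transcription. A common metric is the character error rate (CER): the edit (Levenshtein) distance between the two strings divided by the length of the reference text. The sketch below is a plain-Python version; the sample strings are made up for illustration.

```python
def levenshtein(a, b):
    """Minimum number of single-character insertions, deletions, and substitutions turning a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # deletion
                curr[j - 1] + 1,           # insertion
                prev[j - 1] + (ca != cb),  # substitution (free when characters match)
            ))
        prev = curr
    return prev[-1]


def character_error_rate(reference, hypothesis):
    """Edit distance between OCR output and ground truth, divided by the reference length."""
    if not reference:
        raise ValueError("reference text must not be empty")
    return levenshtein(reference, hypothesis) / len(reference)


ground_truth = "Invoice 1024"
ocr_output = "lnvoice 1O24"  # 'I' read as 'l' and '0' read as 'O'
print(character_error_rate(ground_truth, ocr_output))  # 2 errors / 12 characters, about 0.167
```

Confusions between look-alike characters such as `I`/`l` and `0`/`O` are typical of low-contrast or low-resolution scans.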

Machine Learning

The concept of machine learning has been around for decades, but it has only recently gained widespread attention and adoption due to advances in technology and the availability of large amounts of data.

In the early days of machine learning, algorithms were largely hand-coded and required a lot of manual intervention. However, with the development of more advanced algorithms and the use of powerful computers and data storage systems, machine learning has become more automated and efficient.

Today, machine learning is used in a variety of applications, including image and speech recognition, natural language processing, and predictive analytics. It is also used in industries such as finance, healthcare, and retail to analyze data and make informed decisions.

How does Machine Learning work?

Machine learning involves training a computer to recognize patterns and make decisions based on data. Here’s a general overview of the machine-learning process:

- Data collection: The first step in machine learning is to gather a large amount of data that can be used to train the model. This data should be representative of the problem the model is trying to solve.

- Data preprocessing: After the data is collected, it goes through a process of cleaning and preparation for analysis. This may include removing any irrelevant or incomplete data, and normalizing the data so that it is in a consistent format.

- Model selection: The next step is to select an appropriate machine learning algorithm and model that can be trained on the data. There are many different algorithms to choose from, and the selection will depend on the problem being solved and the characteristics of the data.

- Training: The model is then trained on the data. This involves feeding the model the data and adjusting the model’s parameters to minimize error and maximize accuracy.

- Evaluation: Once the model is trained, it is evaluated on a separate set of data to see how well it performs. If the model performs poorly, it may be necessary to go back and adjust the model or select a different algorithm.

- Deployment: If the model performs well, it can be deployed and used to make predictions or decisions based on new data.
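To make the steps above concrete, here is a minimal end-to-end sketch in plain Python. The data are synthetic, generated from a made-up rule that stands in for real-world labels, and logistic regression trained with batch gradient descent is just one possible choice for the model-selection step. The function names and hyperparameters (`epochs=1000`, `lr=0.5`) are illustrative assumptions.

```python
import math
import random


def collect_data(n, seed):
    """Step 1 (simulated): two features on very different scales plus a 0/1 label.

    The labeling rule is a made-up stand-in for real-world ground truth.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        x1 = rng.uniform(0, 10)    # feature on a 0-10 scale
        x2 = rng.uniform(0, 1000)  # feature on a 0-1000 scale
        rows.append(([x1, x2], 1 if x1 / 10 + x2 / 1000 > 1 else 0))
    return rows


def fit_minmax(rows):
    """Step 2: learn each feature's min and max from the training data only."""
    columns = zip(*(x for x, _ in rows))
    return [(min(c), max(c)) for c in columns]


def normalize(x, stats):
    """Step 2: rescale features to roughly [0, 1] using the training statistics."""
    return [(v - lo) / (hi - lo) for v, (lo, hi) in zip(x, stats)]


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1 / (1 + math.exp(-z))
    e = math.exp(z)
    return e / (1 + e)


def train_logistic_regression(data, epochs=1000, lr=0.5):
    """Steps 3-4: logistic regression fitted by batch gradient descent on log-loss."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for x, y in data:
            error = sigmoid(w[0] * x[0] + w[1] * x[1] + b) - y
            grad_w[0] += error * x[0]
            grad_w[1] += error * x[1]
            grad_b += error
        w = [w[0] - lr * grad_w[0] / len(data), w[1] - lr * grad_w[1] / len(data)]
        b -= lr * grad_b / len(data)
    return w, b


def predict(model, x):
    w, b = model
    return 1 if sigmoid(w[0] * x[0] + w[1] * x[1] + b) >= 0.5 else 0


def accuracy(model, data):
    """Step 5: fraction of examples whose predicted label matches the true label."""
    return sum(predict(model, x) == y for x, y in data) / len(data)


train_raw, test_raw = collect_data(200, seed=0), collect_data(100, seed=1)
stats = fit_minmax(train_raw)
train = [(normalize(x, stats), y) for x, y in train_raw]
test = [(normalize(x, stats), y) for x, y in test_raw]
model = train_logistic_regression(train)
print(f"held-out accuracy: {accuracy(model, test):.2f}")
# Step 6 ("deployment"): score a new example with the trained model.
print(predict(model, normalize([9.0, 800.0], stats)))
```

Normalizing the test set with statistics computed from the training set only keeps information about the held-out data from leaking into training, so the evaluation remains an honest estimate of performance on new data.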

Advantages and Disadvantages of Machine Learning

There are several advantages to using machine learning, including:

- Improved accuracy: Machine learning algorithms can analyze large amounts of data and identify patterns that may not be immediately apparent to humans. This can lead to more accurate predictions and decisions.

- Increased efficiency: Machine learning can automate tasks and processes, saving time and resources for businesses and organizations.

- Personalization: Machine learning can be used to tailor products and services to individual customers, improving the customer experience.

- Scalability: Machine learning algorithms can process large amounts of data, and their performance often improves as more training data becomes available. This makes them well suited to tasks that would be too time-consuming or resource-intensive for humans to complete.

However, there are also some limitations and disadvantages to using machine learning, including:

- Complexity: Machine learning algorithms can be complex and require specialized expertise to implement and maintain.

- Data quality: The accuracy of a model depends heavily on the quality of its training data. If the data is biased or incomplete, the model’s predictions may be inaccurate.

- Explainability: Some machine learning models, such as deep neural networks, are hard to interpret, so it can be difficult to understand how they arrive at their predictions. This can be a problem in fields where explainability is important, such as healthcare and finance.

- Ethical concerns: Machine learning algorithms can perpetuate and amplify existing biases in the data, leading to ethical concerns and potential negative consequences.

Overall, it is important to carefully consider the advantages and disadvantages of machine learning and to ensure that the technology is used ethically and responsibly.

OCR vs. Machine Learning

Optical Character Recognition (OCR) and machine learning are both important technologies that have a wide range of applications.

However, they are used for different purposes and have different strengths and limitations.

OCR is primarily used for the recognition and extraction of text from images and scanned documents. It is an essential tool for digitizing and organizing large volumes of paper documents and is commonly used in industries such as business, education, and healthcare.

Machine learning, on the other hand, is used for a wider range of tasks, including image and speech recognition, natural language processing, and predictive analytics. It is used to analyze data and make predictions or decisions based on patterns and trends identified in the data.

When to use OCR

OCR is best suited for tasks that involve the recognition and extraction of text from images and scanned documents. It is a useful tool for digitizing and organizing paper documents and can be used in industries such as business, education, and healthcare.

When to use machine learning

Machine learning is best suited for tasks that involve the analysis and interpretation of large amounts of data. It is commonly used in industries such as finance, healthcare, and retail to make predictions and inform decisions.

Examples of applications of OCR

- License plate recognition and vehicle identification number (VIN) scanning in the automotive industry

- Digitizing and organizing paper documents in a business or government organization

- Extracting text from scanned books or documents for use in education or research

- Scanning and processing medical records in a healthcare setting
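VIN scanning is another case where a simple rule can catch OCR mistakes after recognition. In North America, the ninth character of a 17-character VIN is a check digit: letters are transliterated to numbers, each position is weighted, and the sum is taken modulo 11, with a remainder of 10 written as `X`. The sketch below assumes that North American scheme (VINs issued elsewhere may not carry a valid check digit), and the function names are hypothetical.

```python
# North American VIN transliteration; the letters I, O and Q are not allowed in a VIN.
LETTER_VALUES = {
    **dict(zip("ABCDEFGH", range(1, 9))),
    **dict(zip("JKLMN", range(1, 6))),
    "P": 7,
    "R": 9,
    **dict(zip("STUVWXYZ", range(2, 10))),
}
# Position weights; position 9 (the check digit itself) has weight 0.
WEIGHTS = (8, 7, 6, 5, 4, 3, 2, 10, 0, 9, 8, 7, 6, 5, 4, 3, 2)


def vin_char_value(ch):
    if "0" <= ch <= "9":
        return ord(ch) - ord("0")
    if ch in LETTER_VALUES:
        return LETTER_VALUES[ch]
    raise ValueError(f"character not allowed in a VIN: {ch!r}")


def vin_check_digit(vin):
    """Compute the expected check character ('0'-'9' or 'X') for a 17-character VIN."""
    if len(vin) != 17:
        raise ValueError("a VIN has exactly 17 characters")
    remainder = sum(vin_char_value(ch) * w for ch, w in zip(vin, WEIGHTS)) % 11
    return "X" if remainder == 10 else str(remainder)


def vin_is_valid(vin):
    """Reject OCR output with a bad length, an illegal character, or a wrong check digit."""
    vin = vin.upper()
    try:
        expected = vin_check_digit(vin)
    except ValueError:
        return False
    return vin[8] == expected


print(vin_is_valid("1M8GDM9AXKP042788"))  # True (sample VIN whose check digit is X)
print(vin_is_valid("1M8GDM9AXKPO42788"))  # False: digit 0 misread as letter O
print(vin_is_valid("1M8GDM9AXKP042738"))  # False: 8 misread as 3
```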

Examples of applications of machine learning

- Image and speech recognition in devices such as smartphones and virtual assistants

- Natural language processing for chatbots and customer service applications

- Predictive analytics in finance to identify trends and inform investment decisions

- Analyzing patient data in healthcare to identify patterns and predict outcomes

Frequently Asked Questions

What is OCR, and how does it relate to machine learning?

OCR, or Optical Character Recognition, is a technology that converts images of printed or handwritten text into machine-readable text. OCR can utilize machine learning techniques to improve its accuracy and recognition capabilities. Machine learning algorithms can be trained on large datasets of text and images to enhance OCR’s ability to recognize characters and words accurately.

Does OCR use machine learning?

Yes, OCR often leverages machine learning. Machine learning algorithms, such as neural networks, are commonly used in OCR systems to improve their accuracy in recognizing and extracting text from images or documents. These algorithms can adapt and learn from data, making OCR more effective, especially when dealing with complex or handwritten text.
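Production OCR engines typically use neural networks, which are far too large to show here. The sketch below swaps in one of the simplest learned models, a nearest-centroid classifier, to illustrate the idea of learning from data: instead of hand-writing a template for each character, the model averages labeled training examples (here, hypothetical 3×5 glyphs plus simulated single-pixel scan noise) into one prototype per class, then labels new glyphs by the closest prototype.

```python
# Hypothetical 3x5 glyphs used only to generate labeled training examples ('#' = ink).
GLYPHS = {
    "H": ["#.#", "#.#", "###", "#.#", "#.#"],
    "I": ["###", ".#.", ".#.", ".#.", "###"],
    "L": ["#..", "#..", "#..", "#..", "###"],
    "O": ["###", "#.#", "#.#", "#.#", "###"],
}


def flatten(rows):
    """Turn a list of '#'/'.' strings into a flat list of 0/1 pixel values."""
    return [1 if ch == "#" else 0 for row in rows for ch in row]


def make_training_set():
    """Labeled examples: each clean glyph plus every single-pixel-flip variant (simulated noise)."""
    examples = []
    for label, rows in GLYPHS.items():
        clean = flatten(rows)
        examples.append((clean, label))
        for i in range(len(clean)):
            noisy = clean.copy()
            noisy[i] = 1 - noisy[i]
            examples.append((noisy, label))
    return examples


def train_nearest_centroid(examples):
    """Learn one prototype per class: the mean of that class's training vectors."""
    sums, counts = {}, {}
    for x, label in examples:
        acc = sums.setdefault(label, [0.0] * len(x))
        for i, v in enumerate(x):
            acc[i] += v
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def classify(centroids, x):
    """Predict the label whose centroid is closest in squared Euclidean distance."""
    return min(centroids, key=lambda label: sum((a - b) ** 2 for a, b in zip(x, centroids[label])))


model = train_nearest_centroid(make_training_set())
# An "O" with two pixels lost to scanning noise, a pattern not present in the training set.
damaged_o = flatten(["###", "..#", "#.#", "#..", "###"])
print(classify(model, damaged_o))  # O
```

Retraining on examples of a new font or handwriting style would update the prototypes without rewriting any rules, which is the adaptability the answer above refers to.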

What is the difference between OCR and machine learning?

OCR and machine learning are related but serve different purposes. OCR is a specific technology focused on recognizing and extracting text from images or documents. In contrast, machine learning is a broader field that encompasses various algorithms and techniques for tasks such as pattern recognition, data analysis, and decision-making. OCR can benefit from machine learning by using it to improve text recognition capabilities.

When should I use OCR, and when should I use machine learning for text recognition?

OCR is the ideal choice when your primary goal is to extract text from images, scanned documents, or handwritten notes. It is a specialized tool designed for text recognition tasks. On the other hand, a custom machine learning approach is suitable when you need a more flexible and adaptable solution for recognizing text in diverse or unstructured sources, such as images shared on social media or other user-generated content.

Can OCR and machine learning be combined for enhanced text recognition?

Yes, combining OCR with machine learning techniques can result in highly accurate and adaptable text recognition systems. OCR can be used as the initial step to extract text from images or documents, and then machine learning can further process and analyze the text for specific tasks, such as language translation, sentiment analysis, or data extraction. This combination can provide a powerful solution for various text-related applications.
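As a minimal sketch of that second stage, assume an OCR engine has already turned scanned pages into plain text. A multinomial naive Bayes classifier with add-one smoothing can learn word statistics from a few labeled documents and then route new documents by type. The document texts and the `invoice`/`medical` labels below are made up for illustration, and a real pipeline would need far more training data and cleanup of OCR noise.

```python
import math
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase and split on whitespace; real OCR output usually needs more cleanup."""
    return text.lower().split()


class NaiveBayes:
    """Multinomial naive Bayes text classifier with add-one (Laplace) smoothing."""

    def fit(self, docs, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        for doc, label in zip(docs, labels):
            self.word_counts[label].update(tokenize(doc))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, doc):
        total_docs = sum(self.class_counts.values())
        best_label, best_score = None, -math.inf
        for label, n_docs in self.class_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(n_docs / total_docs)  # log prior
            for word in tokenize(doc):
                if word in self.vocab:  # ignore words never seen in training
                    score += math.log((counts[word] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical OCR output from scanned documents, with hand-assigned labels.
train_docs = [
    "invoice number 1042 amount due 250.00 payment terms net 30",
    "invoice total due upon receipt remit payment to account",
    "patient name diagnosis prescribed medication dosage twice daily",
    "patient admitted blood pressure normal follow up visit",
]
train_labels = ["invoice", "invoice", "medical", "medical"]

clf = NaiveBayes().fit(train_docs, train_labels)
print(clf.predict("final invoice amount due 90.00"))     # invoice
print(clf.predict("patient medication dosage updated"))  # medical
```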